Crawl Log Analysis for Technical SEO Insights

Most technical SEO audits focus on crawlability issues after they manifest in Search Console. The real optimization happens upstream, in server log analysis. Crawl log analysis reveals the mechanical reality of how search engines interact with your infrastructure, not just what they report back to you.

My view: crawl logs are the most underutilized diagnostic tool in technical SEO. They contain the raw data behind coverage, budget allocation, and resource prioritization decisions. They stay underused because most SEOs treat logs as retrospective reports rather than forward-looking system instrumentation.

Core Measurement Framework for Crawl Log Analysis

Three primary dimensions drive technical SEO decisions from crawl log data: coverage efficiency, budget utilization, and resource prioritization. Each dimension requires specific metrics and different analytical approaches.

Coverage Efficiency Metrics

Coverage measures how comprehensively crawlers map your site structure. The standard coverage ratio (crawled pages divided by total pages) is a single number, so it hides where in the architecture the gaps sit. I would instrument these metrics instead:

- Depth coverage: Percentage of pages crawled at each URL depth level

- Template coverage: Crawl frequency across different page templates

- Content freshness coverage: Correlation between content update frequency and crawl frequency

- Discovery latency: Time between page publication and first crawler visit
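As a minimal sketch of two of these metrics, the Python below computes depth coverage and discovery latency. It assumes you already have a list of known site paths (for example from a sitemap or CMS export), the paths crawlers requested, and publication timestamps. The function names and sample data are hypothetical, and "depth" is taken to mean the number of path segments, which is only one possible definition.

```python
from collections import defaultdict
from datetime import datetime


def url_depth(path):
    """Count non-empty path segments: '/' -> 0, '/a/b/' -> 2 (query string ignored)."""
    return len([seg for seg in path.split("?")[0].split("/") if seg])


def depth_coverage(all_paths, crawled_paths):
    """Share of known pages at each depth that crawlers requested at least once."""
    total = defaultdict(int)
    seen = defaultdict(int)
    crawled = set(crawled_paths)
    for path in set(all_paths):
        depth = url_depth(path)
        total[depth] += 1
        if path in crawled:
            seen[depth] += 1
    return {depth: seen[depth] / total[depth] for depth in sorted(total)}


def discovery_latency(published_at, first_crawled_at):
    """Time from publication to first crawler visit, for pages that were crawled."""
    return {
        path: first_crawled_at[path] - published_at[path]
        for path in published_at
        if path in first_crawled_at
    }


# Hypothetical sample data.
site_pages = ["/", "/blog/", "/blog/post-a", "/blog/post-b", "/shop/shoes/red"]
crawled_pages = ["/", "/blog/", "/blog/post-a"]
coverage = depth_coverage(site_pages, crawled_pages)

latency = discovery_latency(
    {"/blog/post-a": datetime(2024, 3, 1, 9, 0)},
    {"/blog/post-a": datetime(2024, 3, 1, 15, 30)},
)
print(coverage)
print(latency)
```

A per-depth breakdown like this shows whether coverage falls off at a particular level of the architecture, which the single site-wide ratio cannot show.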

Budget Utilization Analysis

Crawl budget is the amount of crawling a search engine is willing to spend on your site. Many sites waste that budget on low-value pages while starving high-value pages of crawler attention. The standard approach focuses on total crawl volume, but how efficiently the budget is allocated matters more.

My framework centers on crawl density rather than absolute numbers:

- Value-weighted crawl density: Crawl frequency weighted by page business value

- Response code efficiency: Ratio of successful crawls (2xx) to total crawl attempts

- Redirect chain depth: Average number of redirects per successful page discovery

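- Server response time distribution: Latency patterns that may throttle crawler behavior (this requires a response-time field in the log format, which the default Apache and nginx formats omit)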
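To make the first two metrics concrete, here is a small sketch. The formula for value-weighted crawl density is an assumption: it is read here as the average business value per crawler request, with values on a 0 to 1 scale that you assign yourself. The function names and sample numbers are illustrative only.

```python
def response_code_efficiency(statuses):
    """Ratio of 2xx responses to all crawler requests."""
    statuses = list(statuses)
    if not statuses:
        return 0.0
    ok = sum(1 for status in statuses if 200 <= status < 300)
    return ok / len(statuses)


def value_weighted_density(crawl_counts, page_value):
    """Average business value per crawl (assumed definition).

    crawl_counts maps URL -> number of crawler hits.
    page_value maps URL -> value score; URLs without a score count as 0.
    """
    total = sum(crawl_counts.values())
    if total == 0:
        return 0.0
    weighted = sum(n * page_value.get(url, 0.0) for url, n in crawl_counts.items())
    return weighted / total


# Hypothetical sample data.
efficiency = response_code_efficiency([200, 200, 301, 404, 200])
density = value_weighted_density(
    {"/product/a": 2, "/tag/misc": 8},
    {"/product/a": 1.0, "/tag/misc": 0.1},
)
print(efficiency, density)
```

A low density alongside a high total crawl volume is the pattern described above: plenty of crawling, most of it spent on pages that matter least.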

Resource Prioritization Signals

Crawlers make implicit prioritization decisions based on site architecture signals. Understanding these patterns shows where internal linking and site structure steer crawler attention well and where they do not. The key signals I would track:

- Internal link equity distribution: How crawl frequency correlates with internal link depth and frequency

- Update frequency recognition: Whether crawlers adapt their revisit patterns to content update schedules

- Canonical consolidation effectiveness: How well crawlers follow canonical directives across URL variations

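- Sitemap priority compliance: Whether declared sitemap priorities influence actual crawl frequency (Google documents that it ignores the sitemap priority value, so expect little or no effect for Googlebot)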
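One of these signals, canonical consolidation, can be approximated from crawl counts alone. The sketch below assumes you have a mapping from each URL variant to its declared canonical (for example from a site crawl). It then reports what fraction of crawler hits in each canonical group landed on the canonical URL itself. The function name and data are hypothetical.

```python
from collections import defaultdict


def canonical_hit_share(crawl_counts, canonical_of):
    """Fraction of crawler hits per canonical group that hit the canonical URL.

    crawl_counts maps URL -> crawler hits.
    canonical_of maps variant URL -> canonical URL; unmapped URLs are
    treated as their own canonical.
    """
    on_canonical = defaultdict(int)
    total = defaultdict(int)
    for url, hits in crawl_counts.items():
        canonical = canonical_of.get(url, url)
        total[canonical] += hits
        if url == canonical:
            on_canonical[canonical] += hits
    return {c: on_canonical[c] / total[c] for c in total if total[c]}


# Hypothetical sample data.
shares = canonical_hit_share(
    {"/shoes": 6, "/shoes?sort=price": 3, "/shoes?color=red": 1, "/about": 4},
    {"/shoes?sort=price": "/shoes", "/shoes?color=red": "/shoes"},
)
print(shares)
```

A share that stays well below 1.0 over time suggests crawlers keep spending requests on variants despite the canonical directive.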

Technical Implementation Architecture

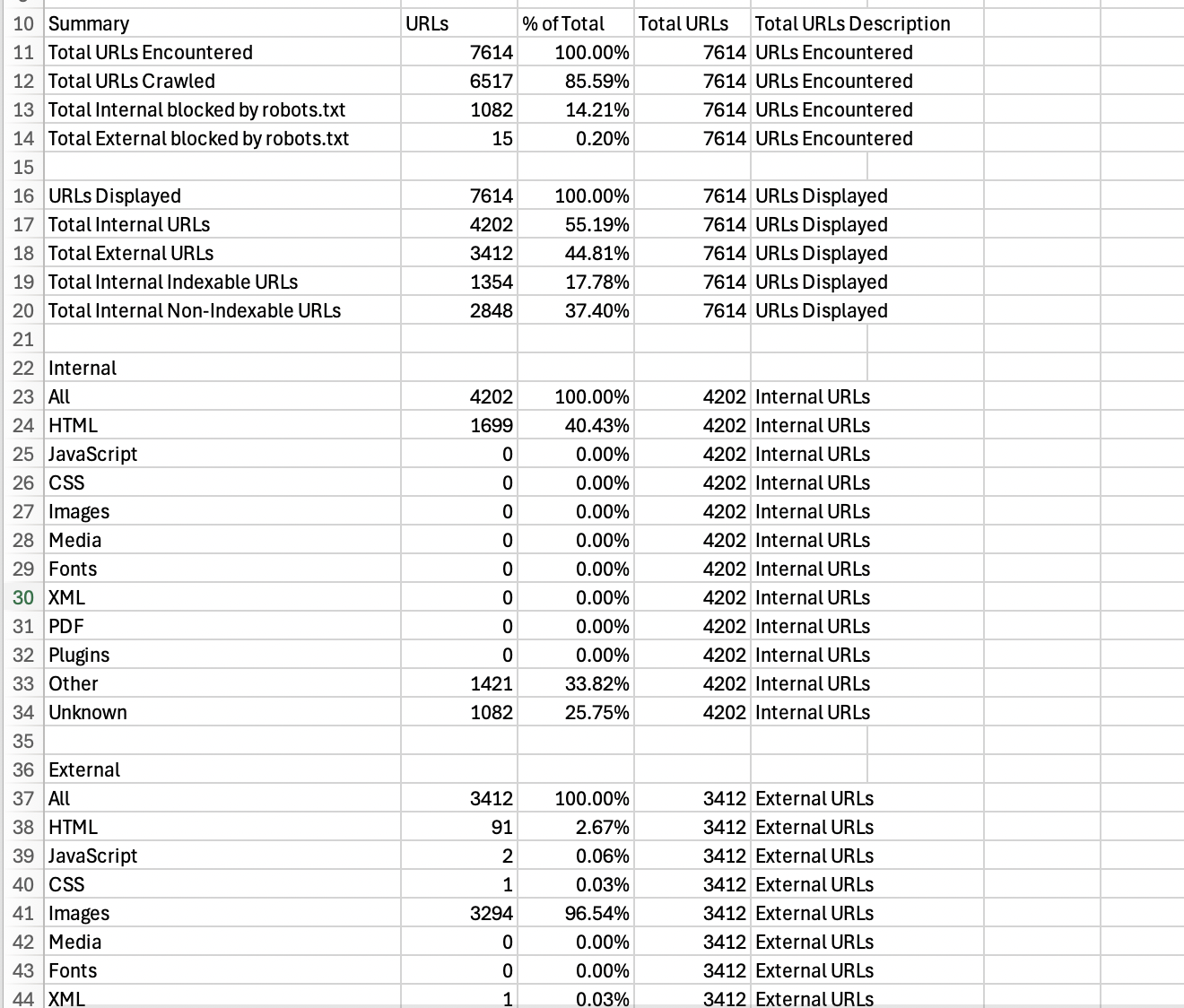

Crawl log analysis requires processing large volumes of semi-structured data. The architecture needs to handle log ingestion, parsing, normalization, and pattern recognition at scale. Two primary tooling approaches dominate the market.

Specialized SEO Tools

Screaming Frog Log File Analyser represents the purpose-built approach. It structures log data specifically for SEO decision-making, with built-in filters for response codes, user agents, and URL patterns. The tool handles common log formats (Apache, IIS, nginx) and provides SEO-specific reporting views.

The strength: immediate SEO context without custom configuration. The limitation: processing constraints on large log files and limited customization for complex site architectures.

Enterprise Log Analytics Platforms

Splunk and similar platforms offer more processing power and customization options. These systems excel at pattern recognition across massive datasets and can correlate crawl behavior with other performance metrics.

My hypothesis: enterprise platforms become necessary when crawl log analysis integrates with broader performance monitoring systems. The additional complexity pays off when you need to correlate crawler behavior with server performance, user behavior, and business metrics.
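Whichever tooling you choose, the ingestion, parsing, and normalization steps look roughly the same. The following is a minimal sketch for the Apache/nginx "combined" log format. The regular expression, the Hit type, and the sample line are assumptions; a server with a custom log format would need a different pattern. Matching on the user-agent string alone does not prove a request came from Google, and verification of the requesting host is not shown.

```python
import re
from datetime import datetime
from typing import NamedTuple, Optional
from urllib.parse import urlsplit

# Pattern for the "combined" log format; adjust for custom formats.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


class Hit(NamedTuple):
    ts: datetime
    path: str
    status: int
    agent: str


def parse_line(line: str) -> Optional[Hit]:
    """Parse one log line; return None if it does not match the pattern."""
    m = LOG_PATTERN.match(line)
    if m is None:
        return None
    ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
    return Hit(ts, m["path"], int(m["status"]), m["agent"])


def normalize_path(path: str) -> str:
    """Drop the query string so URL variants group under one path."""
    return urlsplit(path).path


def is_googlebot(hit: Hit) -> bool:
    """User-agent check only; spoofed agents are not filtered out here."""
    return "Googlebot" in hit.agent


# Hypothetical sample line.
sample = (
    '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] '
    '"GET /products/widget?ref=nav HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
)
hit = parse_line(sample)
print(hit, normalize_path(hit.path), is_googlebot(hit))
```

Returning None for unparseable lines, rather than raising, keeps a long ingestion run going and lets you count the rejects separately.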

Response Code Pattern Recognition

Response codes in crawl logs reveal infrastructure health patterns that impact SEO performance. The standard approach focuses on error rates, but response code timing and clustering provide deeper insights.

Error Pattern Analysis

404 errors in crawl logs indicate either broken links (internal or external) or outdated crawler knowledge. The pattern distribution matters more than absolute numbers:

- Clustered 404s: Multiple 404s from the same URL pattern suggest systematic issues

- Temporal 404 spikes: Sudden increases in 404s often correlate with site migrations or CMS changes

- Persistent 404s: URLs that generate 404s across multiple crawl sessions indicate stale internal links
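The clustered and persistent cases can be separated with a few lines of Python. This sketch assumes crawler hits are already reduced to (day, path, status) tuples and that a "crawl session" can be approximated by a calendar day. Both are simplifications, and the thresholds are arbitrary placeholders.

```python
import re
from collections import Counter, defaultdict


def url_pattern(path):
    """Drop the query string and collapse numeric segments: /product/101 -> /product/{id}."""
    path = path.split("?")[0]
    return re.sub(r"/\d+(?=/|$)", "/{id}", path)


def clustered_404s(hits, min_count=3):
    """URL patterns that produced at least min_count 404 responses."""
    counts = Counter(url_pattern(path) for _, path, status in hits if status == 404)
    return {pattern: n for pattern, n in counts.items() if n >= min_count}


def persistent_404s(hits, min_days=2):
    """Paths that returned 404 on at least min_days distinct days."""
    days = defaultdict(set)
    for day, path, status in hits:
        if status == 404:
            days[path].add(day)
    return sorted(path for path, seen in days.items() if len(seen) >= min_days)


# Hypothetical sample data: (day, path, status).
hits = [
    ("2024-03-01", "/product/101", 404),
    ("2024-03-01", "/product/102", 404),
    ("2024-03-02", "/product/103", 404),
    ("2024-03-01", "/old-page", 404),
    ("2024-03-02", "/old-page", 404),
    ("2024-03-02", "/", 200),
]
clusters = clustered_404s(hits)
persistent = persistent_404s(hits)
print(clusters, persistent)
```

Here the product URLs form a cluster that points at a template or routing problem, while /old-page is a single URL that crawlers keep rediscovering.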

Redirect Chain Optimization

3xx response codes reveal redirect architecture efficiency. Crawler behavior changes significantly based on redirect chain length and consistency. My approach focuses on redirect consolidation rather than elimination:

- Chain depth distribution: Map how often crawlers encounter 1-hop, 2-hop, and 3+ hop redirect chains

- Redirect consistency: Whether the same source URL consistently redirects to the same destination

- Redirect target accessibility: Response codes for final redirect destinations
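A sketch of the first two measurements follows. Standard access log formats record the 3xx status but not the redirect target, so this assumes a source-to-destination redirect map obtained elsewhere (a site crawl or server configuration). The function names and sample data are hypothetical.

```python
from collections import Counter, defaultdict


def resolve_chain(start, redirects, max_hops=10):
    """Follow a URL through the redirect map; return (final_url, hops).

    Stops at max_hops or when a URL repeats (redirect loop).
    """
    seen = {start}
    url, hops = start, 0
    while url in redirects and hops < max_hops:
        url = redirects[url]
        hops += 1
        if url in seen:
            break
        seen.add(url)
    return url, hops


def chain_depth_distribution(redirects):
    """Count redirecting URLs by chain length: '1', '2', or '3+' hops."""
    dist = Counter()
    for source in redirects:
        _, hops = resolve_chain(source, redirects)
        dist["3+" if hops >= 3 else str(hops)] += 1
    return dict(dist)


def inconsistent_sources(observations):
    """Sources seen redirecting to more than one destination."""
    targets = defaultdict(set)
    for source, target in observations:
        targets[source].add(target)
    return sorted(s for s, seen in targets.items() if len(seen) > 1)


# Hypothetical sample data.
redirects = {"/a": "/b", "/b": "/c", "/c": "/d", "/old": "/new"}
distribution = chain_depth_distribution(redirects)
unstable = inconsistent_sources(
    [("/promo", "/sale"), ("/promo", "/home"), ("/old", "/new")]
)
print(distribution, unstable)
```

Consolidation then means pointing every source in a multi-hop chain directly at the final URL that resolve_chain returns.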

Crawl Frequency Optimization Strategy

Crawl frequency patterns reveal how search engines prioritize your content architecture. The optimization goal is aligning crawler behavior with business priorities, not maximizing absolute crawl volume.

Content Freshness Correlation

Pages with regular content updates should receive proportionally higher crawl frequency. The correlation strength indicates how effectively your site architecture communicates content freshness to crawlers.

I would measure this through update-to-crawl latency: the time between content modification and next crawler visit. High-value pages with poor latency scores indicate structural optimization opportunities.
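Update-to-crawl latency is straightforward to compute once modification times and crawler visit times are side by side. A minimal sketch, assuming the modification timestamp comes from your CMS and the crawl times from parsed logs (the function name and sample data are illustrative):

```python
from bisect import bisect_left
from datetime import datetime


def update_to_crawl_latency(modified_at, crawl_times):
    """Delay between a modification and the next crawl at or after it.

    Returns None if the page has not been crawled since the modification.
    """
    ordered = sorted(crawl_times)
    i = bisect_left(ordered, modified_at)
    if i == len(ordered):
        return None
    return ordered[i] - modified_at


# Hypothetical sample data.
crawls = [
    datetime(2024, 3, 1, 8, 0),
    datetime(2024, 3, 3, 8, 0),
    datetime(2024, 3, 6, 8, 0),
]
lag = update_to_crawl_latency(datetime(2024, 3, 2, 20, 0), crawls)
pending = update_to_crawl_latency(datetime(2024, 3, 7, 0, 0), crawls)
print(lag, pending)
```

Tracking the None cases matters as much as the measured latencies, since they are the updates crawlers have not picked up at all.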

Template-Level Crawl Allocation

Different page templates serve different business functions and should receive different crawl frequencies. Product pages might need daily crawling during inventory updates, while static content pages might only need weekly attention.

The analysis framework maps crawl frequency to template types, revealing whether crawlers understand your content hierarchy. Misaligned crawl allocation suggests internal linking or XML sitemap optimization opportunities.
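Mapping crawl frequency to templates mostly comes down to classifying URLs. The sketch below uses URL-pattern rules to assign a template and then reports each template's share of crawler requests. The rules and template names are hypothetical and would need to match your own URL structure.

```python
import re
from collections import Counter

# Hypothetical URL rules; first match wins.
TEMPLATE_RULES = [
    ("product", re.compile(r"^/products/[^/]+$")),
    ("category", re.compile(r"^/category/")),
    ("article", re.compile(r"^/blog/")),
]


def classify(path):
    """Assign a template name to a path, ignoring the query string."""
    path = path.split("?")[0]
    for name, pattern in TEMPLATE_RULES:
        if pattern.search(path):
            return name
    return "other"


def crawl_share_by_template(paths):
    """Share of crawler requests that went to each template."""
    counts = Counter(classify(p) for p in paths)
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()} if total else {}


# Hypothetical sample data: one entry per crawler request.
requests = [
    "/products/widget",
    "/products/gadget?ref=x",
    "/blog/post-a",
    "/category/tools/",
    "/about",
    "/products/widget",
]
share = crawl_share_by_template(requests)
print(share)
```

Comparing these shares with each template's share of total pages, or of business value, shows where allocation is misaligned.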

Integration with Broader Technical SEO Systems

Crawl log analysis provides maximum value when integrated with other technical SEO data sources. The combination reveals system-level optimization opportunities that single-source analysis misses.

Search Console Integration

Search Console reports what search engines communicate back to site owners. Crawl logs show what actually happened during crawl sessions. The discrepancies between these data sources often reveal the most actionable optimization opportunities.

Coverage discrepancies are especially useful for exposing indexation issues. Pages that appear frequently in crawl logs but remain absent from Search Console coverage reports often have content quality or technical accessibility issues.
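The comparison itself is a set difference. In this sketch, crawl counts come from parsed logs, and the indexed URL list is assumed to come from a Search Console export you have already loaded. How that list is obtained is outside the sketch, and the min_crawls threshold is an arbitrary placeholder.

```python
def crawled_but_not_indexed(crawl_counts, indexed_urls, min_crawls=5):
    """URLs crawled at least min_crawls times that are missing from the index list.

    Returns (url, crawl_count) pairs, most-crawled first.
    """
    indexed = set(indexed_urls)
    flagged = [
        (url, hits)
        for url, hits in crawl_counts.items()
        if hits >= min_crawls and url not in indexed
    ]
    return sorted(flagged, key=lambda item: (-item[1], item[0]))


# Hypothetical sample data.
suspects = crawled_but_not_indexed(
    {"/a": 12, "/b": 7, "/c": 2, "/d": 9},
    ["/a"],
)
print(suspects)
```

Sorting by crawl count puts the pages consuming the most crawler attention without an indexing payoff at the top of the review list.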

Site Performance Correlation

Server response times in crawl logs correlate with broader site performance metrics. Slow responses to crawler requests often predict poor user experience metrics, making crawl log analysis an early warning system for performance issues.

My approach involves correlating crawler response times with Core Web Vitals data. Pages that consistently show slow response times to crawlers typically need performance optimization that benefits both SEO and user experience.
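Before any correlation with Core Web Vitals, the slow pages need to be identified from the logs. The sketch below flags URLs whose median response time to crawlers exceeds a threshold. It assumes response time is present in your logs, which for Apache means adding a field such as %D (microseconds) and for nginx $request_time (seconds), and that values have been converted to milliseconds. The threshold and minimum sample size are placeholders.

```python
from collections import defaultdict
from statistics import median


def slow_urls(timings, threshold_ms=500.0, min_samples=3):
    """Median crawler response time per URL, for URLs above the threshold.

    timings is an iterable of (url, response_time_ms) pairs.
    URLs with fewer than min_samples observations are skipped.
    """
    by_url = defaultdict(list)
    for url, ms in timings:
        by_url[url].append(ms)
    result = {}
    for url, values in by_url.items():
        if len(values) < min_samples:
            continue
        mid = median(values)
        if mid > threshold_ms:
            result[url] = mid
    return result


# Hypothetical sample data.
timings = [
    ("/search", 900), ("/search", 1200), ("/search", 700),
    ("/", 80), ("/", 120), ("/", 95),
    ("/rare", 2000),
]
flagged = slow_urls(timings)
print(flagged)
```

The median is used rather than the mean so that a single slow request does not flag an otherwise fast page.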

Decision Framework for Crawl Log Implementation

Not every site needs comprehensive crawl log analysis. The implementation complexity and ongoing maintenance overhead require clear business justification. My decision framework considers three factors.

Site Scale and Complexity

Sites with more than 10,000 pages or complex URL structures benefit most from systematic crawl log analysis. Smaller sites might achieve better ROI from manual log review during specific optimization campaigns.

Technical Resource Availability

Crawl log analysis requires ongoing technical attention. The system needs regular maintenance, pattern recognition expertise, and integration with existing SEO workflows. Organizations without dedicated technical SEO resources should start with purpose-built tools rather than enterprise platforms.

Business Impact Potential

The optimization potential depends on current crawl efficiency. Sites already showing strong coverage and appropriate crawl frequency patterns have limited upside. Sites with obvious crawl budget waste or coverage gaps show immediate optimization opportunities.